Electrical engineer Gilbert Herrera was appointed research director of the US National Security Agency in late 2021, just as an AI revolution was brewing inside the US tech industry.

The NSA, sometimes jokingly said to stand for No Such Agency, has long hired top math and computer science talent. Its technical leaders have been early and avid users of advanced computing and AI. And yet when Herrera spoke with me by phone about the implications of the latest AI boom from NSA headquarters in Fort Meade, Maryland, it seemed that, like many others, the agency has been stunned by the recent success of the large language models behind ChatGPT and other hit AI products. The conversation has been lightly edited for clarity and length.

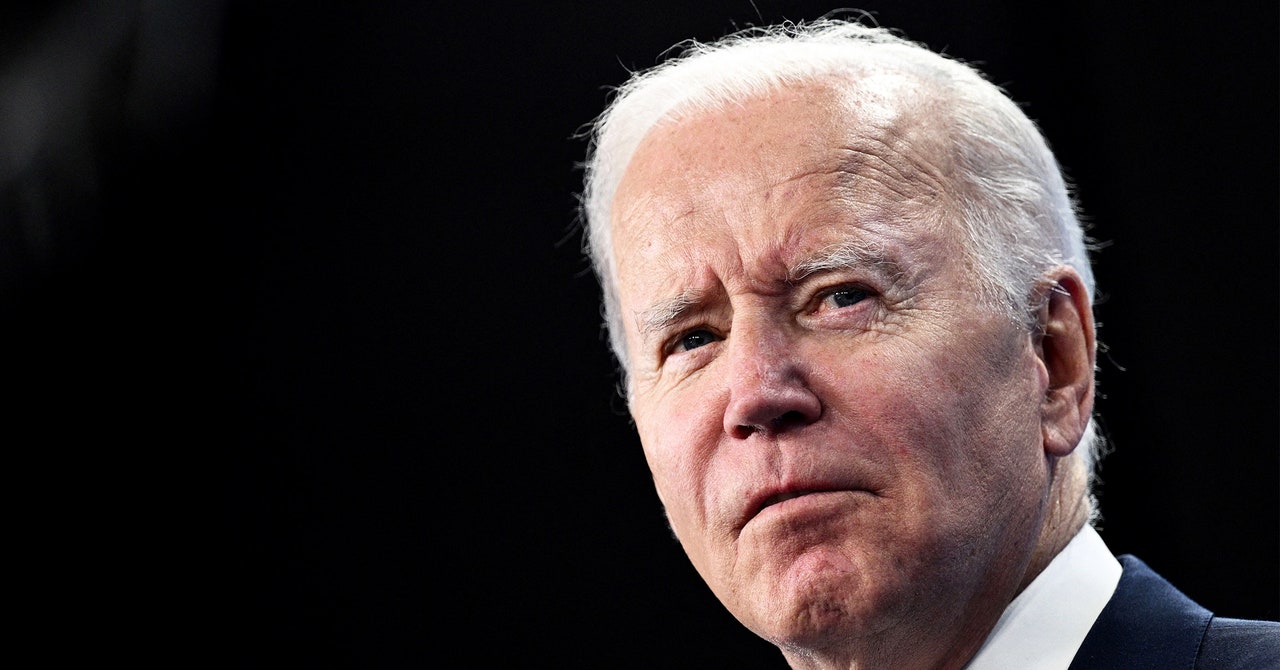

Gilbert Herrera. Courtesy of National Security Agency

How big of a surprise was the ChatGPT moment to the NSA?

Oh, I thought your first question was going to be “what did the NSA learn from the Ark of the Covenant?” That’s been a recurring one since about 1939. I’d love to tell you, but I can’t.

What I think everybody learned from the ChatGPT moment is that if you throw enough data and enough computing resources at AI, these emergent properties appear.

The NSA really views artificial intelligence as at the frontier of a long history of using automation to perform our missions with computing. AI has long been viewed as ways that we could operate smarter and faster and at scale. And so we’ve been involved in research leading to this moment for well over 20 years.

Large language models were around long before generative pretrained transformer (GPT) models. But this “ChatGPT moment”—once you could ask it to write a joke, or once you can engage in a conversation—that really differentiates it from other work that we and others have done.

The NSA and its counterparts among US allies have occasionally developed important technologies before anyone else but kept them secret, like public key cryptography in the 1970s. Did the same thing perhaps happen with large language models?

At the NSA we couldn’t have created these big transformer models, because we could not use the data. We cannot use US citizens’ data. Another thing is the budget. I listened to a podcast where someone shared a Microsoft earnings call, and they said they were spending $10 billion a quarter on platform costs. [The total US intelligence budget in 2023 was $100 billion.]

It really has to be people that have enough money for capital investment that is tens of billions and [who] have access to the kind of data that can produce these emergent properties. And so it really is the hyperscalers [largest cloud companies] and potentially governments that don’t care about personal privacy, don’t have to follow personal privacy laws, and don’t have an issue with stealing data. And I’ll leave it to your imagination as to who that may be.

Doesn’t that put the NSA—and the United States—at a disadvantage in intelligence gathering and processing?

I’ll push back a little bit: It doesn’t put us at a big disadvantage. We kind of need to work around it, and I’ll come to that.

It’s not a huge disadvantage for our responsibility, which is dealing with nation-state targets. If you look at other applications, it may make it more difficult for some of our colleagues that deal with domestic intelligence. But the intelligence community is going to need to find a path to using commercial language models and respecting privacy and personal liberties. [The NSA is prohibited from collecting domestic intelligence, although multiple whistleblowers have warned that it does scoop up US data.]