![]()

Download a PDF of the paper titled Dynamics of Instruction Tuning: Each Ability of Large Language Models Has Its Own Growth Pace, by Chiyu Song and 5 other authors

Download PDF

HTML (experimental)

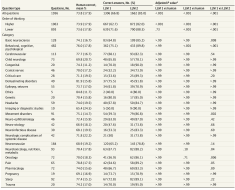

Abstract:Instruction tuning is a burgeoning method to elicit the general intelligence of Large Language Models (LLMs). However, the creation of instruction data is still largely heuristic, leading to significant variation in quantity and quality across existing datasets. While some research advocates for expanding the number of instructions, others suggest that a small set of well-chosen examples is adequate. To better understand data construction guidelines, our research provides a granular analysis of how data volume, parameter size, and data construction methods influence the development of each underlying ability of LLM, such as creative writing, code generation, and logical reasoning. We present a meticulously curated dataset with over 40k instances across ten abilities and examine instruction-tuned models with 7b to 33b parameters. Our study reveals three primary findings: (i) Despite the models’ overall performance being tied to data and parameter scale, individual abilities have different sensitivities to these factors. (ii) Human-curated data strongly outperforms synthetic data from GPT-4 in efficiency and can constantly enhance model performance with volume increases, but is unachievable with synthetic data. (iii) Instruction data brings powerful cross-ability generalization, as evidenced by out-of-domain evaluations. Furthermore, we demonstrate how these findings can guide more efficient data constructions, leading to practical performance improvements on two public benchmarks.

Submission history

From: Chiyu Song [view email]

[v1]

Mon, 30 Oct 2023 15:37:10 UTC (10,991 KB)

[v2]

Thu, 22 Feb 2024 13:21:27 UTC (3,751 KB)