Vice President Harris announced during her speech in London that the declaration has now been signed by US-aligned nations including the UK, Canada, Australia, Germany, and France. The 31 signatories do not include China or Russia, which alongside the US are seen as leaders in the development of autonomous weapons systems. China did, however, join the US in signing a declaration on the risks posed by AI as part of the AI Safety Summit coordinated by the British government.

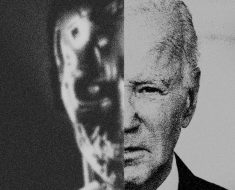

Deadly Automation

Talk of military AI often evokes the idea of AI-powered weapons capable of deciding for themselves when and how to use lethal force. The US and several other nations have resisted calls for an outright ban on such weapons, but the Pentagon’s policy is that autonomous systems should allow “commanders and operators to exercise appropriate levels of human judgment over the use of force.” Discussions around the issue as part of the UN’s Convention on Certain Conventional Weapons—established in 1980 to create international rules around the use of weapons deemed to be excessive or indiscriminate in nature—have largely stalled.

The US-led declaration announced last week doesn’t go so far as to seek a ban on any specific use of AI on the battlefield. Instead, it focuses on ensuring that AI is used in ways that guarantee transparency and reliability. That’s important, Kahn says, because militaries are looking to harness AI in a multitude of ways. Even if restricted and closely supervised, the technology could still have destabilizing or dangerous effects.

One concern is that a malfunctioning AI system might do something that triggers an escalation in hostilities. “The focus on lethal autonomous weapons is important,” Kahn says. “At the same time, the process has been bogged down in these debates, which are focused exclusively on a type of system that doesn’t exist yet.”

Some are still working to ban lethal autonomous weapons. On the same day Harris announced the new declaration on military AI, the First Committee of the UN General Assembly, a group of nations that works on disarmament and weapons proliferation, approved a new resolution on lethal autonomous weapons.

The resolution calls for a report on the “humanitarian, legal, security, technological, and ethical” challenges raised by lethal autonomous weapons and for input from international and regional organizations, the International Committee of the Red Cross, civil society, the scientific community, and industry. A statement issued by the UN after the vote quoted Egypt’s representative as saying “an algorithm must not be in full control of decisions that involve killing or harming humans.”