Explore LLM quantization and run GGUF files in ctransformers

Source: Run Large Language Models On A Budget: Model Quantization And GGUF For Efficient GPU-Free Operation

You may also like

Application of Convex Hypersurfaces part9(Machine Learning 2024)

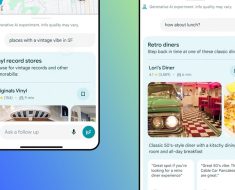

Google Maps is getting a generative AI boost. Here’s what that will look like

How will generative AI affect digital investigations and e-discovery?

arrays – Is it possible to map our computer directories to a dictionary in Python

Iit Delhi: IIT Delhi to propel professionals into the Quantum Future with Certification in Quantum Computing & Machine Learning