@inproceedings{wang-li-2023-learning,

title = "Learning from Mistakes via Cooperative Study Assistant for Large Language Models",

author = "Wang, Danqing and

Li, Lei",

editor = "Bouamor, Houda and

Pino, Juan and

Bali, Kalika",

booktitle = "Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing",

month = dec,

year = "2023",

address = "Singapore",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2023.emnlp-main.659",

doi = "10.18653/v1/2023.emnlp-main.659",

pages = "10667--10685",

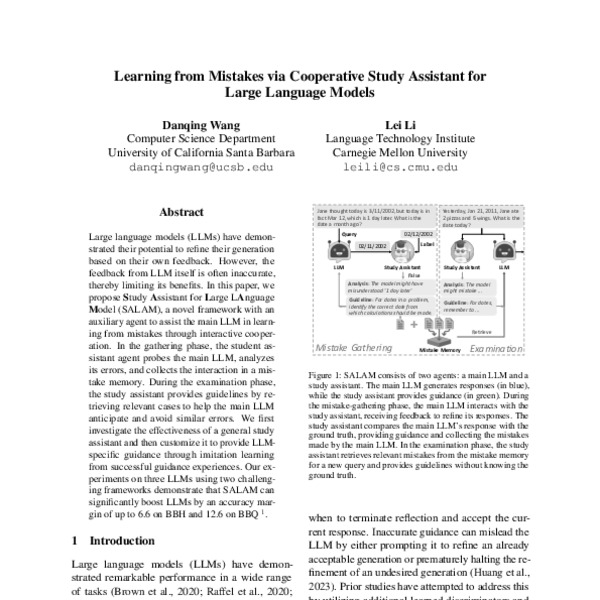

abstract = "Large language models (LLMs) have demonstrated their potential to refine their generation based on their own feedback. However, the feedback from LLM itself is often inaccurate, thereby limiting its benefits. In this paper, we propose Study Assistant for Large LAnguage Model (SALAM), a novel framework with an auxiliary agent to assist the main LLM in learning from mistakes through interactive cooperation. In the gathering phase, the student assistant agent probes the main LLM, analyzes its errors, and collects the interaction in a mistake memory. During the examination phase, the study assistant provides guidelines by retrieving relevant cases to help the main LLM anticipate and avoid similar errors. We first investigate the effectiveness of a general study assistant and then customize it to provide LLM-specific guidance through imitation learning from successful guidance experiences. Our experiments on three LLMs using two challenging frameworks demonstrate that SALAM can significantly boost LLMs by an accuracy margin of up to 6.6 on BBH and 12.6 on BBQ.",

}

<?xml version="1.0" encoding="UTF-8"?>

<modsCollection xmlns="http://www.loc.gov/mods/v3">

<mods ID="wang-li-2023-learning">

<titleInfo>

<title>Learning from Mistakes via Cooperative Study Assistant for Large Language Models</title>

</titleInfo>

<name type="personal">

<namePart type="given">Danqing</namePart>

<namePart type="family">Wang</namePart>

<role>

<roleTerm authority="marcrelator" type="text">author</roleTerm>

</role>

</name>

<name type="personal">

<namePart type="given">Lei</namePart>

<namePart type="family">Li</namePart>

<role>

<roleTerm authority="marcrelator" type="text">author</roleTerm>

</role>

</name>

<originInfo>

<dateIssued>2023-12</dateIssued>

</originInfo>

<typeOfResource>text</typeOfResource>

<relatedItem type="host">

<titleInfo>

<title>Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing</title>

</titleInfo>

<name type="personal">

<namePart type="given">Houda</namePart>

<namePart type="family">Bouamor</namePart>

<role>

<roleTerm authority="marcrelator" type="text">editor</roleTerm>

</role>

</name>

<name type="personal">

<namePart type="given">Juan</namePart>

<namePart type="family">Pino</namePart>

<role>

<roleTerm authority="marcrelator" type="text">editor</roleTerm>

</role>

</name>

<name type="personal">

<namePart type="given">Kalika</namePart>

<namePart type="family">Bali</namePart>

<role>

<roleTerm authority="marcrelator" type="text">editor</roleTerm>

</role>

</name>

<originInfo>

<publisher>Association for Computational Linguistics</publisher>

<place>

<placeTerm type="text">Singapore</placeTerm>

</place>

</originInfo>

<genre authority="marcgt">conference publication</genre>

</relatedItem>

<abstract>Large language models (LLMs) have demonstrated their potential to refine their generation based on their own feedback. However, the feedback from LLM itself is often inaccurate, thereby limiting its benefits. In this paper, we propose Study Assistant for Large LAnguage Model (SALAM), a novel framework with an auxiliary agent to assist the main LLM in learning from mistakes through interactive cooperation. In the gathering phase, the student assistant agent probes the main LLM, analyzes its errors, and collects the interaction in a mistake memory. During the examination phase, the study assistant provides guidelines by retrieving relevant cases to help the main LLM anticipate and avoid similar errors. We first investigate the effectiveness of a general study assistant and then customize it to provide LLM-specific guidance through imitation learning from successful guidance experiences. Our experiments on three LLMs using two challenging frameworks demonstrate that SALAM can significantly boost LLMs by an accuracy margin of up to 6.6 on BBH and 12.6 on BBQ.</abstract>

<identifier type="citekey">wang-li-2023-learning</identifier>

<identifier type="doi">10.18653/v1/2023.emnlp-main.659</identifier>

<location>

<url>https://aclanthology.org/2023.emnlp-main.659</url>

</location>

<part>

<date>2023-12</date>

<extent unit="page">

<start>10667</start>

<end>10685</end>

</extent>

</part>

</mods>

</modsCollection>

%0 Conference Proceedings
%T Learning from Mistakes via Cooperative Study Assistant for Large Language Models
%A Wang, Danqing
%A Li, Lei
%Y Bouamor, Houda
%Y Pino, Juan
%Y Bali, Kalika
%S Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing
%D 2023
%8 December
%I Association for Computational Linguistics
%C Singapore
%F wang-li-2023-learning
%X Large language models (LLMs) have demonstrated their potential to refine their generation based on their own feedback. However, the feedback from LLM itself is often inaccurate, thereby limiting its benefits. In this paper, we propose Study Assistant for Large LAnguage Model (SALAM), a novel framework with an auxiliary agent to assist the main LLM in learning from mistakes through interactive cooperation. In the gathering phase, the student assistant agent probes the main LLM, analyzes its errors, and collects the interaction in a mistake memory. During the examination phase, the study assistant provides guidelines by retrieving relevant cases to help the main LLM anticipate and avoid similar errors. We first investigate the effectiveness of a general study assistant and then customize it to provide LLM-specific guidance through imitation learning from successful guidance experiences. Our experiments on three LLMs using two challenging frameworks demonstrate that SALAM can significantly boost LLMs by an accuracy margin of up to 6.6 on BBH and 12.6 on BBQ.
%R 10.18653/v1/2023.emnlp-main.659
%U https://aclanthology.org/2023.emnlp-main.659
%U https://doi.org/10.18653/v1/2023.emnlp-main.659
%P 10667-10685

Markdown (Informal)

[Learning from Mistakes via Cooperative Study Assistant for Large Language Models](https://aclanthology.org/2023.emnlp-main.659) (Wang & Li, EMNLP 2023)